Introduction to Decision Trees in Modern Technology

Decision trees are one of the most popular algorithms used in machine learning for solving classification and regression problems. In fields like Data Science and AI, they help make decisions based on patterns in data. Their simple structure makes them easy to understand, especially for beginners working with Python.

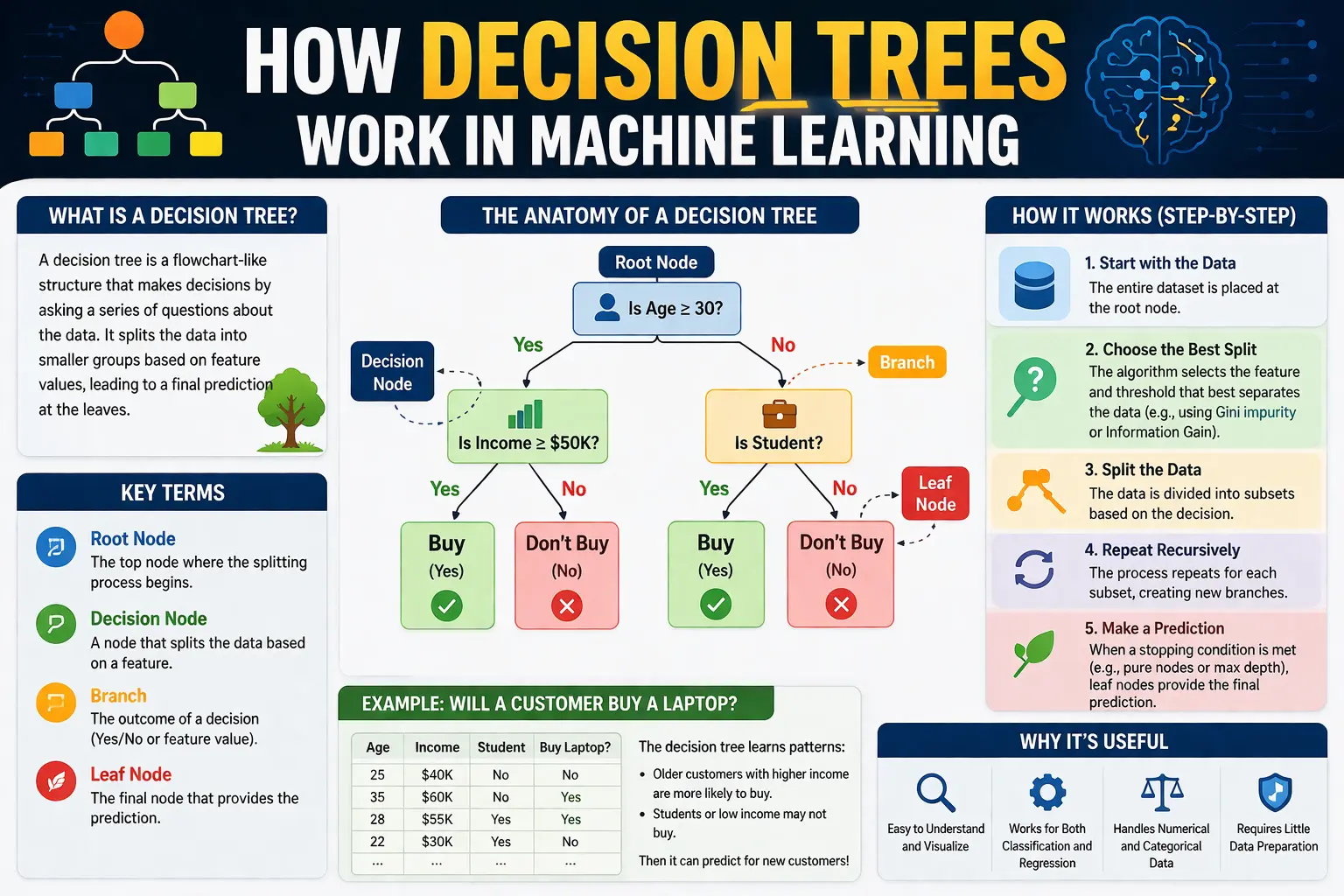

What is a Decision Tree Model

A decision tree is a flowchart-like structure where each internal node represents a condition, each branch represents an outcome, and each leaf node represents a final decision. In data analytics, this model helps break down complex problems into smaller, manageable parts, making analysis more efficient.
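The following minimal sketch shows how nodes, branches, and leaves fit together in a hand-built tree. The loan-approval features (income, debt_ratio), the thresholds, and the decisions are all hypothetical, as are the Node, Leaf, and predict names. A real model would learn its conditions from data rather than have them written by hand.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class Leaf:
    """A leaf node holds the final decision."""
    decision: str


@dataclass
class Node:
    """An internal node tests one feature against a threshold."""
    feature: str
    threshold: float
    left: "Tree"   # branch followed when value <= threshold
    right: "Tree"  # branch followed when value > threshold


Tree = Union[Node, Leaf]


def predict(tree: Tree, sample: dict) -> str:
    """Follow branches from the root until a leaf is reached."""
    while isinstance(tree, Node):
        tree = tree.left if sample[tree.feature] <= tree.threshold else tree.right
    return tree.decision


# Hypothetical loan-approval tree; features and thresholds are made up.
loan_tree = Node(
    feature="income",
    threshold=30_000,
    left=Leaf("reject"),
    right=Node(
        feature="debt_ratio",
        threshold=0.4,
        left=Leaf("approve"),
        right=Leaf("review"),
    ),
)

print(predict(loan_tree, {"income": 25_000, "debt_ratio": 0.2}))  # reject
print(predict(loan_tree, {"income": 50_000, "debt_ratio": 0.3}))  # approve
print(predict(loan_tree, {"income": 50_000, "debt_ratio": 0.6}))  # review
```

Each prediction is just a walk from the root to one leaf, so the path taken also explains why that decision was made.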

How Decision Trees Work Step by Step

Decision trees work by splitting the dataset into smaller subsets based on specific conditions. At each step, the algorithm searches for the feature (and, for numeric features, the threshold) whose split best separates the target values. In machine learning, this process repeats on each subset until a stopping condition is met, for example when a node contains only one class, a maximum depth is reached, or no further split is possible, resulting in a tree-like structure.
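The splitting loop described above can be sketched as a small recursive function. This is a simplified, CART-style illustration. It assumes numeric features, scores candidate splits with Gini impurity, and stops when a node is pure, when a hypothetical max_depth limit is reached, or when no split separates the data. The function names and the toy data are made up for this example, and libraries such as Scikit-learn use far more optimized implementations.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_split(rows, labels):
    """Try every (feature, threshold) pair; return the lowest weighted Gini."""
    best = None  # (score, feature_index, threshold)
    n = len(rows)
    for f in range(len(rows[0])):
        for t in sorted({r[f] for r in rows}):
            left = [y for r, y in zip(rows, labels) if r[f] <= t]
            right = [y for r, y in zip(rows, labels) if r[f] > t]
            if not left or not right:
                continue  # a split must produce two non-empty subsets
            score = len(left) / n * gini(left) + len(right) / n * gini(right)
            if best is None or score < best[0]:
                best = (score, f, t)
    return best


def build_tree(rows, labels, depth=0, max_depth=3):
    """Recursively split until a stopping condition is met."""
    majority = Counter(labels).most_common(1)[0][0]
    # Stop if the node is pure or the depth limit is reached.
    if len(set(labels)) == 1 or depth >= max_depth:
        return majority
    split = best_split(rows, labels)
    if split is None:  # no split separates the data
        return majority
    _, f, t = split
    left_rows = [r for r in rows if r[f] <= t]
    left_labels = [y for r, y in zip(rows, labels) if r[f] <= t]
    right_rows = [r for r in rows if r[f] > t]
    right_labels = [y for r, y in zip(rows, labels) if r[f] > t]
    return {
        "feature": f,
        "threshold": t,
        "left": build_tree(left_rows, left_labels, depth + 1, max_depth),
        "right": build_tree(right_rows, right_labels, depth + 1, max_depth),
    }


# Toy data: [hours_studied, hours_slept] -> pass/fail (made-up values)
X = [[1, 4], [2, 8], [3, 5], [6, 7], [7, 6], [8, 8]]
y = ["fail", "fail", "fail", "pass", "pass", "pass"]
print(build_tree(X, y))
# {'feature': 0, 'threshold': 3, 'left': 'fail', 'right': 'pass'}
```

On this toy data, one split on the first feature (hours_studied <= 3) separates the classes perfectly. Both subsets are pure, so recursion stops after a single level.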

Key Components of Decision Trees

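The main components of a decision tree include the root node, decision nodes, branches, and leaf nodes. The root node holds the first test, which every input passes through. Decision (internal) nodes apply further tests, branches connect each node to its children according to the outcome of the test, and leaf nodes hold the final prediction, such as a class label or a numeric value. In Data Science, understanding these components is essential for interpreting a trained model and diagnosing its behavior.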
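One way to see these components in a trained model is to inspect the arrays that Scikit-learn stores in a fitted tree's tree_ attribute. The sketch below uses the Iris dataset and max_depth=3 purely for illustration. In this representation, node 0 is the root, and any node whose children_left entry is -1 is a leaf.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)
clf = DecisionTreeClassifier(max_depth=3, random_state=0).fit(X, y)

tree = clf.tree_
# Leaves have no children (children_left == -1); every other node is a decision node.
leaves = [i for i in range(tree.node_count) if tree.children_left[i] == -1]
decision_nodes = [i for i in range(tree.node_count) if tree.children_left[i] != -1]

print("root node 0 splits on feature", tree.feature[0], "at", tree.threshold[0])
print("decision nodes (root included):", len(decision_nodes))
print("leaf nodes:", len(leaves))
# Each decision node has exactly two branches: a left and a right child.
print("branches:", 2 * len(decision_nodes))
```

Because every decision node in a Scikit-learn tree has exactly two children, the tree always has one more leaf than it has decision nodes.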

Splitting Criteria in Decision Trees

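To decide how to split the data, decision trees use criteria such as Gini Index, Entropy, and Information Gain. Gini Index and Entropy measure how mixed the classes in a node are, and both are zero for a node that contains a single class. Information Gain is the drop in Entropy achieved by a split. At each node, the algorithm picks the split that reduces impurity the most. In AI applications, the choice of splitting criterion can affect the shape and accuracy of the model, although Gini Index and Entropy often produce similar trees in practice.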
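As a worked illustration of these criteria, the functions below compute the Gini Index, Entropy (in bits), and Information Gain for a hypothetical node of ten samples that is split in two different ways. The labels and splits are invented for this example. The formulas are the standard definitions.

```python
from collections import Counter
from math import log2


def gini(labels):
    """Gini Index: 1 - sum(p_k^2) over class proportions p_k."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def entropy(labels):
    """Entropy in bits: -sum(p_k * log2(p_k))."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def information_gain(parent, children):
    """Parent entropy minus the size-weighted entropy of the child subsets."""
    n = len(parent)
    weighted = sum(len(ch) / n * entropy(ch) for ch in children)
    return entropy(parent) - weighted


parent = ["yes"] * 5 + ["no"] * 5
split_a = [["yes"] * 4 + ["no"], ["yes"] + ["no"] * 4]          # cleaner split
split_b = [["yes"] * 3 + ["no"] * 2, ["yes"] * 2 + ["no"] * 3]  # noisier split

print(round(gini(parent), 3))                        # 0.5
print(round(entropy(parent), 3))                     # 1.0
print(round(information_gain(parent, split_a), 3))   # 0.278
print(round(information_gain(parent, split_b), 3))   # 0.029
```

The cleaner split (split_a) yields the higher Information Gain, so a tree that uses this criterion would choose it over split_b.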

Advantages of Decision Trees

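Decision trees offer several advantages, including simplicity, interpretability, and flexibility. In data analytics, they are easy to visualize and understand, even for non-technical users, because every prediction can be traced as a sequence of if-then rules. They can handle both numerical and categorical data, making them versatile tools in machine learning projects, although some libraries, including Scikit-learn, expect categorical features to be encoded as numbers first.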
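Interpretability can be demonstrated with Scikit-learn's export_text function, which prints a trained tree as nested if-then rules. The Iris dataset and max_depth=2 are assumptions chosen to keep the output short.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier, export_text

data = load_iris()
clf = DecisionTreeClassifier(max_depth=2, random_state=0).fit(data.data, data.target)

# Print the learned tree as indented if-then rules with readable feature names.
rules = export_text(clf, feature_names=list(data.feature_names))
print(rules)
```

Each indented line is one condition on the path to a leaf, and each "class:" line is the decision stored at that leaf.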

Limitations of Decision Trees

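Despite their benefits, decision trees also have some limitations. A tree that keeps splitting until every leaf is pure can grow very complex and overfit, memorizing noise in the training data instead of learning patterns that generalize. In Data Science, pruning is used to reduce overfitting. It can limit growth in advance (for example, by setting a maximum depth or a minimum number of samples per leaf) or remove weak branches after the tree is trained.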
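The following sketch shows post-training pruning with Scikit-learn's cost-complexity pruning, which uses the ccp_alpha parameter and the cost_complexity_pruning_path method. The dataset, the train/test split, and the choice of a mid-range alpha are assumptions made for illustration only. In practice, alpha would be selected by validation.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=0)

# An unconstrained tree keeps splitting until its leaves are pure.
full = DecisionTreeClassifier(random_state=0).fit(X_train, y_train)

# Cost-complexity pruning: larger ccp_alpha values remove more subtrees.
path = full.cost_complexity_pruning_path(X_train, y_train)
alpha = path.ccp_alphas[len(path.ccp_alphas) // 2]  # mid-range alpha, for illustration only
pruned = DecisionTreeClassifier(random_state=0, ccp_alpha=alpha).fit(X_train, y_train)

for name, model in [("full", full), ("pruned", pruned)]:
    print(name,
          "leaves:", model.get_n_leaves(),
          "train acc:", round(model.score(X_train, y_train), 3),
          "test acc:", round(model.score(X_test, y_test), 3))
```

The pruned tree has fewer leaves. Whether its test accuracy is better or worse depends on the data and on the alpha chosen.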

Implementation of Decision Trees Using Python

Decision trees can be implemented easily using Python libraries such as Scikit-learn, which provides the DecisionTreeClassifier and DecisionTreeRegressor classes. With just a few lines of code, beginners can build and train a model. This makes decision trees a great starting point for those learning machine learning and working on practical projects.
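Here is a minimal sketch of that workflow in Scikit-learn: load data, split it, fit a DecisionTreeClassifier, and score it on held-out samples. The Iris dataset, max_depth=3, and the 75/25 split are illustrative assumptions, not recommended settings.

```python
from sklearn.datasets import load_iris
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)
# Hold out 25% of the samples, keeping class proportions equal in both parts.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y
)

model = DecisionTreeClassifier(criterion="gini", max_depth=3, random_state=42)
model.fit(X_train, y_train)

y_pred = model.predict(X_test)
print("test accuracy:", accuracy_score(y_test, y_pred))
```

For a regression problem, DecisionTreeRegressor follows the same fit and predict pattern.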

Real-World Applications of Decision Trees

Decision trees are used in various industries for tasks like customer segmentation, fraud detection, and medical diagnosis. In AI systems, they help make fast, data-driven decisions. Their practical applications make them an important tool in data analytics.

Integration with Data Visualization Tools

Decision tree results can be visualized using different tools to make them easier to interpret. In Power BI, predictions produced by a decision tree model can be imported and displayed through dashboards and charts. This helps present insights clearly to stakeholders.
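One simple route into Power BI is to export model predictions to a CSV file and load it through Get Data > Text/CSV. The sketch below trains a Scikit-learn tree on the Iris dataset, and the file name tree_predictions.csv is hypothetical. For brevity it predicts on the training data, which a real report should avoid.

```python
import csv

from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier

data = load_iris()
clf = DecisionTreeClassifier(max_depth=3, random_state=0).fit(data.data, data.target)
predictions = clf.predict(data.data)

OUTPUT_PATH = "tree_predictions.csv"  # hypothetical file name
with open(OUTPUT_PATH, "w", newline="") as f:
    writer = csv.writer(f)
    # Header row: the original features plus actual and predicted class names.
    writer.writerow(list(data.feature_names) + ["actual", "predicted"])
    for row, actual, pred in zip(data.data, data.target, predictions):
        writer.writerow(list(row) + [data.target_names[actual], data.target_names[pred]])
```

In Power BI, the actual and predicted columns can then feed visuals such as a matrix that compares the two.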

Best Practices for Using Decision Trees

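To get the best results, it is important to follow best practices such as selecting relevant features, controlling tree size to avoid overfitting, and validating the model on data it was not trained on, for example with cross-validation. In Data Science, tuning hyperparameters such as maximum depth and minimum samples per leaf helps improve the accuracy and reliability of predictions.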
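Tuning and validation can be combined with Scikit-learn's GridSearchCV, which scores each parameter combination by cross-validation on the training set. The parameter grid, the dataset, and the 5-fold setting below are illustrative assumptions. The held-out test set is used only once, after tuning is finished.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Candidate values are illustrative, not recommended defaults.
param_grid = {
    "max_depth": [2, 3, 4, 5, None],
    "min_samples_leaf": [1, 5, 10],
    "criterion": ["gini", "entropy"],
}

search = GridSearchCV(
    DecisionTreeClassifier(random_state=0), param_grid, cv=5, scoring="accuracy"
)
search.fit(X_train, y_train)  # refits the best combination on all training data

print("best params:", search.best_params_)
print("mean CV accuracy:", round(search.best_score_, 3))
# Evaluate once on data that played no part in tuning.
print("held-out accuracy:", round(search.score(X_test, y_test), 3))
```

Keeping the test set out of the search ensures that the final score is not inflated by the tuning process.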

Conclusion: Understanding Decision Trees for Better Insights

Learning how decision trees work is valuable for anyone interested in Data Science, AI, machine learning, and data analytics, whether they build models in Python or present results in Power BI. They provide a simple yet powerful way to analyze data and make decisions. By mastering this algorithm, beginners can build a strong foundation in machine learning and advance their skills in data-driven technologies.