Introduction to Gradient Descent

Gradient Descent is one of the most widely used optimization techniques in machine learning. It helps a model learn by repeatedly adjusting its parameters to reduce a measure of prediction error, usually called the loss or cost function. In Data Science and AI, it is commonly used to train models, often in Python.

Understanding the Basic Concept

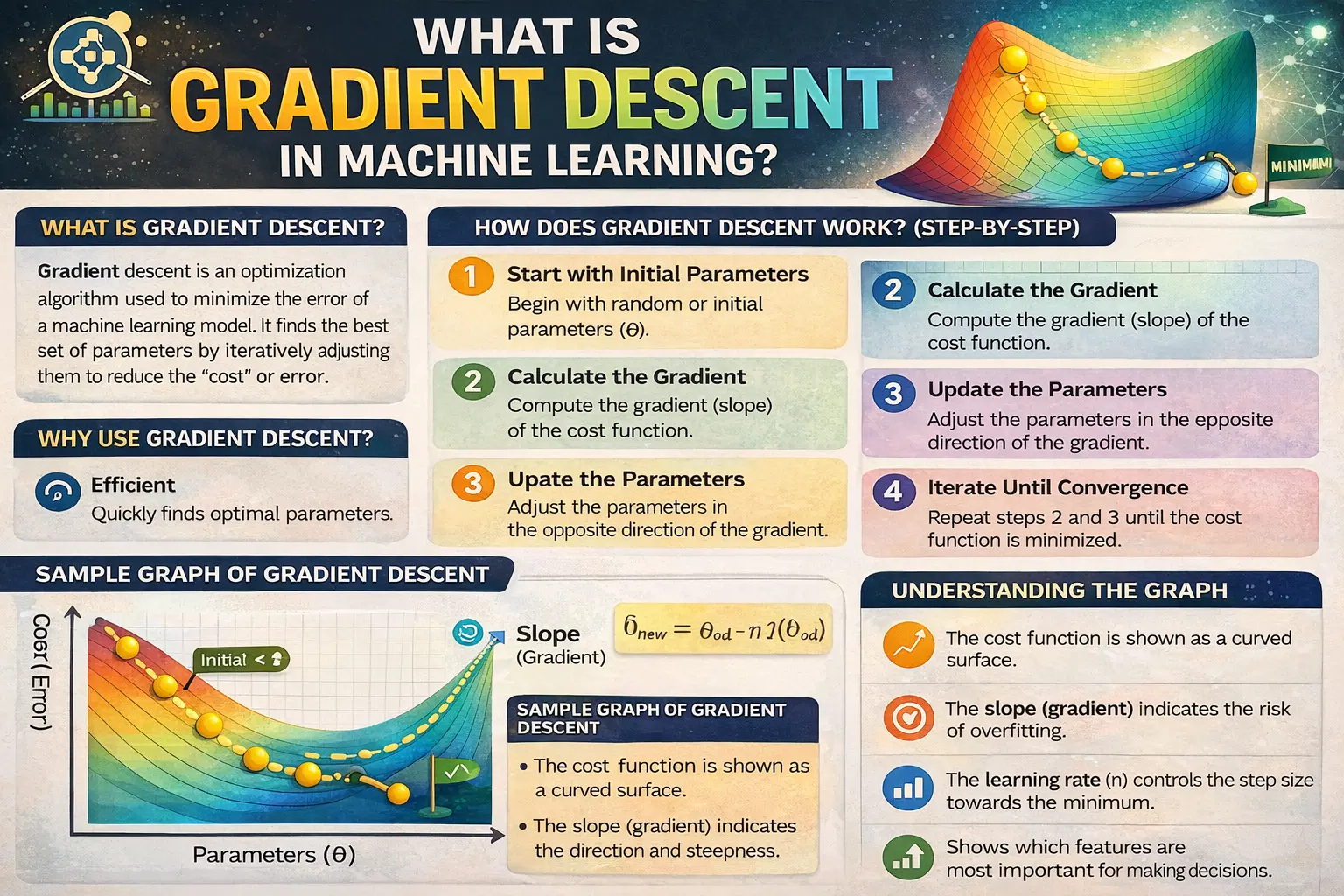

Gradient Descent works by searching for the lowest point of a function, referred to as its minimum. In data analytics, that function is usually a loss that measures the difference between predicted and actual values, such as the mean squared error. The algorithm adjusts the model parameters step by step to make the loss as small as possible.
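To make "the difference between predicted and actual values" concrete, here is a minimal sketch of one common loss, the mean squared error. The function name `mean_squared_error` and the sample numbers are illustrative assumptions, not taken from any particular library or dataset.

```python
import numpy as np

def mean_squared_error(y_true, y_pred):
    """Average of the squared differences between actual and predicted values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))

# Made-up values for illustration only.
actual = [3.0, 5.0, 7.0]
predicted = [2.0, 5.0, 9.0]

# Errors are 1, 0 and -2, so the loss is (1 + 0 + 4) / 3.
loss = mean_squared_error(actual, predicted)
print(loss)
```

A smaller value means the predictions are closer to the actual values, and this is the quantity Gradient Descent tries to reduce.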

How Gradient Descent Works

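The algorithm starts with an initial guess for the parameters and gradually updates them based on the slope of the function at the current point. In machine learning, this slope is called the gradient: the vector of partial derivatives of the loss with respect to each parameter, which points in the direction of steepest increase. By moving in the opposite direction of the gradient, the algorithm reduces the error and improves model performance.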
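The update described above can be sketched in a few lines. This example assumes a simple one-dimensional function, f(x) = (x - 3)^2, chosen only because its minimum at x = 3 is easy to verify. The names `grad_f` and `gradient_descent` and the default settings are illustrative.

```python
def f(x):
    """Example function with a single minimum at x = 3."""
    return (x - 3.0) ** 2

def grad_f(x):
    """Derivative (gradient) of f."""
    return 2.0 * (x - 3.0)

def gradient_descent(grad, x0, learning_rate=0.1, n_steps=100):
    """Repeatedly step against the gradient, starting from the guess x0."""
    x = x0
    for _ in range(n_steps):
        # Core update rule: new value = old value - learning rate * gradient
        x = x - learning_rate * grad(x)
    return x

x_min = gradient_descent(grad_f, x0=0.0)
print(x_min, f(x_min))
```

Starting from 0.0, each step shrinks the distance to the minimum, so the result ends up very close to 3.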

Role of Learning Rate

The learning rate is a crucial factor in Gradient Descent. It determines how big a step the algorithm takes toward the minimum. In Data Science, choosing the right learning rate is important because a very high value can overshoot the minimum or even diverge, while a very low value makes convergence slow.
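The effect of the learning rate can be seen in a small experiment. This sketch assumes the function f(x) = x^2, whose minimum is at 0, and three arbitrarily chosen learning rates. The helper name `run` is hypothetical.

```python
def run(learning_rate, x0=1.0, n_steps=20):
    """Run gradient descent on f(x) = x**2, whose gradient is 2 * x."""
    x = x0
    for _ in range(n_steps):
        x = x - learning_rate * 2.0 * x
    return x

# Too small, reasonable, and too large (values picked for illustration).
results = {lr: run(lr) for lr in (0.01, 0.1, 1.1)}

for lr, x_final in results.items():
    print(f"learning rate {lr}: x after 20 steps = {x_final:.4f}")
```

With the smallest rate, x is still far from 0 after 20 steps. With the middle rate, it is close to 0. With the largest rate, every step overshoots and x moves further away from the minimum.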

Types of Gradient Descent

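There are three main types of Gradient Descent: Batch Gradient Descent, which computes the gradient over the entire dataset for each update; Stochastic Gradient Descent, which uses a single training example per update; and Mini-Batch Gradient Descent, which uses a small subset. In AI applications, each type has its own advantages depending on the size of the dataset and the computational resources available.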
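The three variants differ mainly in how many examples are used for each update, so a single function with a `batch_size` parameter can sketch all of them. The example below assumes a linear model with a mean squared error loss and synthetic, noise-free data. The function name `train_linear_model` and all hyperparameter values are illustrative choices.

```python
import numpy as np

def train_linear_model(X, y, batch_size, learning_rate=0.1, n_epochs=200, seed=0):
    """Fit weights w so that X @ w approximates y, using batches of the given size."""
    rng = np.random.default_rng(seed)
    n_samples, n_features = X.shape
    w = np.zeros(n_features)
    for _ in range(n_epochs):
        order = rng.permutation(n_samples)  # shuffle the data each epoch
        for start in range(0, n_samples, batch_size):
            idx = order[start:start + batch_size]
            error = X[idx] @ w - y[idx]
            grad = 2.0 * X[idx].T @ error / len(idx)  # gradient of the batch MSE
            w -= learning_rate * grad
    return w

# Synthetic data following y = 1 + 2 * x; the column of ones models the intercept.
x = np.linspace(-1.0, 1.0, 40)
X = np.column_stack([np.ones_like(x), x])
y = 1.0 + 2.0 * x

w_batch = train_linear_model(X, y, batch_size=len(X))  # Batch Gradient Descent
w_sgd = train_linear_model(X, y, batch_size=1)         # Stochastic Gradient Descent
w_mini = train_linear_model(X, y, batch_size=8)        # Mini-Batch Gradient Descent
print(w_batch, w_sgd, w_mini)
```

On this small, noise-free problem all three settings recover weights close to 1 and 2. On real data they trade off differently: the batch version makes fewer, more expensive updates, while the stochastic and mini-batch versions make many cheaper, noisier ones.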

Importance for Machine Learning Models

Gradient Descent is used to train many machine learning models, from linear regression to neural networks. In data analytics, it reduces the training error a little at each iteration. For models that have no closed-form solution, such as neural networks, fitting the parameters would be difficult without it.

Advantages of Gradient Descent

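One of the key advantages of Gradient Descent is its simplicity: each update needs only the gradient of the loss at the current parameters. In Data Science, its stochastic and mini-batch variants can handle large datasets and complex models, because they do not need to process all the data for every update. It is also flexible and can be applied to any machine learning problem whose loss function is differentiable.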

Challenges and Limitations

Despite its usefulness, Gradient Descent has some challenges. On non-convex functions it may get stuck in a local minimum instead of reaching the global one, and it can take many iterations to converge. In machine learning, careful tuning of parameters like the learning rate is required to achieve good performance.
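The local-minimum problem can be illustrated with a function that has two valleys. This sketch assumes f(x) = x^4 - 3x^2 + x, picked only because it has one shallow and one deep minimum. The function names and starting points are illustrative.

```python
def f(x):
    """Non-convex example with a local minimum near 1.13 and a global one near -1.30."""
    return x ** 4 - 3.0 * x ** 2 + x

def grad_f(x):
    return 4.0 * x ** 3 - 6.0 * x + 1.0

def descend(x0, learning_rate=0.01, n_steps=500):
    """Plain gradient descent from the starting point x0."""
    x = x0
    for _ in range(n_steps):
        x = x - learning_rate * grad_f(x)
    return x

x_from_right = descend(2.0)   # settles in the shallower valley
x_from_left = descend(-2.0)   # settles in the deeper valley
print(x_from_right, f(x_from_right))
print(x_from_left, f(x_from_left))
```

Both runs use the same settings, yet they end at different points with different function values. Which minimum is reached depends on where the algorithm starts.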

Implementation Using Python

Gradient Descent can be implemented easily using Python libraries such as NumPy and TensorFlow. These tools allow beginners to experiment with optimization techniques and understand how models learn in Data Science projects.
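As a starting point for experimenting, here is a minimal NumPy sketch that fits a straight line with Gradient Descent and records the loss at every iteration. The data is randomly generated, and the true intercept (4), slope (3), noise level, learning rate, and iteration count are all assumptions chosen for illustration. The result is compared with NumPy's least-squares solver as a sanity check.

```python
import numpy as np

# Synthetic data: y is roughly 4 + 3 * x, with a little random noise.
rng = np.random.default_rng(42)
x = rng.uniform(0.0, 1.0, size=100)
y = 4.0 + 3.0 * x + rng.normal(0.0, 0.1, size=100)

X = np.column_stack([np.ones_like(x), x])  # column of ones for the intercept
w = np.zeros(2)                            # initial guess: intercept 0, slope 0
learning_rate = 0.3
loss_history = []

for _ in range(2000):
    residual = X @ w - y
    loss_history.append(float(np.mean(residual ** 2)))   # mean squared error
    w -= learning_rate * (2.0 / len(y)) * (X.T @ residual)

# Reference solution from NumPy's least-squares solver.
w_exact, *_ = np.linalg.lstsq(X, y, rcond=None)
print("gradient descent:", w)
print("least squares:   ", w_exact)
```

The values stored in `loss_history` can be plotted against the iteration number to see how quickly the error falls.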

Real-World Applications

Gradient Descent is used in many real-world applications such as recommendation systems, image recognition, and predictive analytics. In these AI systems, it is typically the method used to train the underlying models, so it has a direct effect on how accurate they are.

Integration with Visualization Tools

Results from Gradient Descent can be visualized to better understand how the algorithm converges, for example by plotting the loss against the iteration number. In Power BI, processed data and model outputs can be displayed through interactive dashboards, helping users interpret results effectively.

Conclusion: Importance of Gradient Descent

Understanding Gradient Descent is valuable for anyone working in Data Science, AI, machine learning, or data analytics, whether they build models in Python or present results in Power BI. It is a fundamental concept that powers many machine learning models and helps achieve accurate predictions. By mastering this technique, beginners can build a strong foundation in data-driven technologies.